In Collective Choice: Rating Systems, I discuss rating scales of various sorts, from eBay's 3-point scale to RPGnet's double 5-point scale and BoardGame Geek's 10-point scale.

Of the various rating scales, 5-point scales are probably the most common on the Internet. You can find them not just on my own RPGnet, but also on Amazon, Netflix, and iTunes, as well as many other sites and services. Unfortunately, 5-point rating scales also face many challenges in practice, and different studies point to different flaws in this particular methodology.

First, one study using Amazon data has shown that many undetailed ratings (where the rater isn't required to add anything other than the rating itself) show a bimodal distribution. In other words, the ratings tend to cluster around two different values (e.g., 1 and 5) rather than forming a normal distribution with a single peak (e.g., at 3). Thus the median of these ratings does not accurately reflect product quality; the ratings instead record two conflicting camps of opinion.
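To make the bimodal problem concrete, here is a minimal Python sketch using two hypothetical sets of ten ratings (invented for illustration, not Amazon's data). Both sets have the same mean and median of 3, yet only one reflects a consensus that the product is average; the histogram is what reveals the split.

```python
from collections import Counter
from statistics import mean, median

# Hypothetical ratings, invented for illustration only.
polarized = [1, 1, 1, 1, 1, 5, 5, 5, 5, 5]  # bimodal: clusters at 1 and 5
consensus = [2, 3, 3, 3, 3, 3, 3, 3, 3, 4]  # unimodal: clusters at 3

def summarize(ratings):
    """Return the mean, the median, and a 1-5 star histogram of a list of ratings."""
    counts = Counter(ratings)
    histogram = [counts.get(star, 0) for star in range(1, 6)]
    return mean(ratings), median(ratings), histogram

for label, ratings in [("polarized", polarized), ("consensus", consensus)]:
    avg, mid, hist = summarize(ratings)
    print(f"{label}: mean={avg} median={mid} histogram={hist}")
```

Both lines report a mean and median of 3; only the histograms ([5, 0, 0, 0, 5] versus [0, 1, 8, 1, 0]) show that the first product divides its raters.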

Second, our own study using RPGnet data has shown that many detailed ratings (where the rater does add more information, in this case a full review) are normally distributed but biased toward the high end of the scale. On RPGnet, for example, we found that 90% of the ratings on this 5-point scale were 3 or higher, with an average of around 4.

Randy Farmer of Yahoo suggests that this scale limitation is particularly troublesome for fan-based ratings, such as those found on episodic TV sites:

Only the fans of a show evaluate the episodes, and being fans, will never rate an episode one or two stars, ever. I’ve seen this attempted over and over on the net with the same results every time: Each episode of a show is 4-stars +/- .5 stars. This goes all the way back to the Babylon-5 website, probably the first source for this kind of data.

(And indeed, the Babylon 5 first-season episode "TKO" is considered atrocious even by fans. Yet it has a "Fair" rating of 6.1 out of 10 on tv.com.)

Thus, even when bimodality is not a problem, the upward bias of a 5-point scale often leaves only 2 or 3 meaningful values. This is problematic because it minimizes differentiation: in many cases, a 5-star rating system in which most ratings are either 3 or 4 is no better than a simple thumbs-up/thumbs-down system.
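One way to put a number on "differentiation" is Shannon entropy, measured in bits. This is a swapped-in measure, not one used in the studies above: a thumbs-up/thumbs-down system can carry at most 1 bit per rating, while a 5-point scale can carry up to log2(5), about 2.32 bits. The distributions below are invented for illustration; the point is that a 5-star system whose ratings are split evenly between 3 and 4 carries exactly as much information as thumbs up or down.

```python
from math import log2

def entropy_bits(distribution):
    """Shannon entropy, in bits, of a list of probabilities (zero entries are skipped)."""
    return -sum(p * log2(p) for p in distribution if p > 0)

# Hypothetical share of ratings at 1..5 stars, invented for illustration.
uniform_five = [0.2, 0.2, 0.2, 0.2, 0.2]
only_three_or_four = [0.0, 0.0, 0.5, 0.5, 0.0]
thumbs = [0.5, 0.5]  # thumbs down, thumbs up

print(round(entropy_bits(uniform_five), 2))        # 2.32
print(round(entropy_bits(only_three_or_four), 2))  # 1.0
print(round(entropy_bits(thumbs), 2))              # 1.0
```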

However, given that 5-point scales are probably here to stay, we are forced to make the best use of them we can.

First, we need to give raters incentives to provide meaningful ratings. We've already seen that requesting detailed ratings can do this: when people take the time to write text, and know that their names will be attached to it, they generally do a better job of rating. There are other possible incentive techniques as well, such as RPGnet's new XP System.

Second, we need to make a 5-point scale more meaningful by encouraging raters to use the bottom half of the scale, not just the top half. One way to accomplish this is to make ratings distinct (as I briefly mentioned in my previous article on this topic) and to encourage standards under which an "average" rating is 2 or 3, not 4.

As an example of how to accomplish both of these goals with existing 5-point rating scales, I've detailed my own experiences using ratings on two popular services: iTunes and Amazon. By giving myself incentives and making my use of ratings very distinct, I have created more meaningful and useful output for myself.

Music Ratings - iTunes

Apple's iTunes software lets you rate individual songs from 0 to 5 Stars. If you use iTunes with an iPod, you can change a song's rating on the iPod, and the change will be copied to your iTunes database the next time you sync. The "Shuffle Songs" feature on newer iPods has an option to play higher-rated songs more often. And a very powerful feature, Smart Playlists, can dynamically build sophisticated playlists based on ratings. All of this makes rating music in iTunes very useful.

After Shannon and I wrote our Rating Systems article, I examined the ratings in my iTunes catalog. Using Alastair's fabulous XSLT iTunes rating statistics tool, I discovered that my iTunes ratings were clearly biased too high, matching the pattern we'd described. I had far too many songs rated 4 Stars and almost nothing rated 1 or 2, which made my ratings less useful.

Here are some statistics from your iTunes Library: 4172 tracks, 412 (10%) rated

| | Number | % of rated | Cumulative % of rated: Actual | Cumulative % of rated: Target | Shortfall |
|---|---|---|---|---|---|
| Tracks rated 5 stars | 112 | 27 | 27 | 5 | -22 |
| Tracks rated 4 stars | 183 | 44 | 72 | 15 | -57 |
| Tracks rated 3 stars | 92 | 22 | 94 | 50 | -44 |
| Tracks rated 2 stars | 22 | 5 | 99 | 90 | -9 |
| Tracks rated 1 star | 3 | 1 | 100 | | |

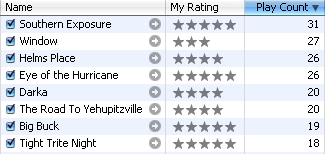
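The columns in these statistics appear to be derived as follows: "% of rated" is each star count divided by the number of rated tracks, "Actual" is the running total of that percentage counted down from 5 stars, and "Shortfall" is Target minus Actual. The Python sketch below reproduces the columns under that reading. It is my reconstruction, not the tool's actual code, and the target percentages (5, 15, 50, 90) are simply copied from the table.

```python
# Cumulative targets copied from the tool's output; 1-star tracks have no target.
TARGETS = {5: 5, 4: 15, 3: 50, 2: 90}

def rating_stats(counts):
    """counts maps stars (1-5) to track counts; returns one row per star level, 5 down to 1.

    Each row is (stars, number, % of rated, cumulative actual %, target %, shortfall),
    with percentages rounded to whole numbers as in the tool's output.
    """
    total = sum(counts.values())
    rows, running = [], 0
    for stars in range(5, 0, -1):
        number = counts.get(stars, 0)
        running += number
        pct = round(100 * number / total)
        actual = round(100 * running / total)
        target = TARGETS.get(stars)
        shortfall = None if target is None else target - actual
        rows.append((stars, number, pct, actual, target, shortfall))
    return rows

before = {5: 112, 4: 183, 3: 92, 2: 22, 1: 3}
for row in rating_stats(before):
    print(row)
```

Passing the later counts (74, 144, 211, 270, and 27 tracks at 5 down to 1 stars) reproduces the columns of the revised statistics in the same way.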

So over the last few months I've completely revamped my iTunes ratings. Since I can't change the user interface, I've changed my behavior. I'm also taking advantage of two other fields: "checked," which I use to make my ratings more distinct, and "play count," which shows whether or not I've listened to a song through to the end.

Here are the criteria I used:

Rated 5 - Exemplars: Only my most favorite songs are rated 5. They have to meet the following criteria: they make me feel good or excite me no matter how often I listen to them, I can typically listen to them often without getting tired of them, and they are the best of their particular genre.

Rated 4 - Great: There is only a small difference between a song rated 4 and one rated 5 – typically a 4 doesn't excite me or make me smile quite as much, or it isn't necessarily an exemplar of its genre. However, I can still listen to these songs often without getting tired of them. Songs rated 4 and 5 are the ones I carry on my iPod Shuffle.

Rated 4 - Great (Unchecked): There are a few songs that I consider great but only want to play when I'm in the mood for them, or only in a specific order, or that "don't play well" with other music. For instance, I love the song "The Highwayman" by Loreena McKennitt; however, it is over 10 minutes long, and I just don't want to hear that type of song unless I'm in the mood for it. Other examples are the 12 songs that make up Mussorgsky's "Pictures at an Exhibition": I want them played in order when I do play them, and I really don't want them played in the middle of my other songs. Unfortunately, iTunes does not let you select only unchecked items, so I don't have a Smart Playlist for these; instead, I keep them in a regular playlist.

Rated 3 - Good: These are songs I like. Typically I can play them regularly, but not too often. Songs rated 3-5 go on my iPod Nano.

Rated 3 - Good (Unchecked): There is a lot of music that I think is good but don't want to play all the time. I have a large catalog of movie soundtracks, and all but a few of those tracks are in this category. Again, iTunes does not let you select only unchecked items in a Smart Playlist, so I have several regular playlists for these items.

Rated 2 - Ok: I have very diverse musical tastes, starting with jazz and various ethnic and world music, and also including quite a bit of pop, rap, R&B, punk, and metal that I enjoy. I don't enjoy them all the time, but I do like them to pop up every once in a while for variety. So I rate these 2 and leave them checked. I have an old 40GB iPod that I take on long trips, and it stores everything I have that is checked and rated 2-5.

Rated 2 - Ok (Unchecked): Some songs are OK, but I really have to be in the mood specifically for them. Listening to Jimmy Buffett's "Margaritaville" can be a guilty pleasure on a lazy summer day at the beach, but it isn't something I want to listen to regularly. I have a number of special playlists for songs rated like this.

Rated 1 - Don't Like: These are the songs that I don't like; they're just not my style. Many are still quality music, they just don't work for me. I do keep most of them for completeness: it might be just one or two songs on an album, and I want to keep the album complete. Or I keep them in case my tastes change. But in general, once something is rated 1 Star, I'll probably never listen to it again.

Rated 1 - Trash (Unchecked): These are songs that I not only don't like, but that just aren't good music. I don't like most rap music, but I can tell that most of it is still quality music. Some songs are junk, though; these I rate 1 and uncheck, and they are candidates for deletion the next time I purge my collection.

Unrated & Listened (play count > 0): If I've listened to something through to the end but haven't rated it yet, it shows up in this Smart Playlist. Periodically I check this Smart Playlist, sort by play count, and try to rate everything that I've listened to more than once.

Unrated & Unlistened (play count = 0): This is the default state when a new song is added to my library. Any song that is unrated, checked, and has a play count of 0 shows up in my "Unrated & Unlistened" Smart Playlist. When I'm in the mood for variety, I go through this playlist and rate songs.

Modifying my rating system in this way has caused my average rating for music to change from around 4 to somewhere between 2 and 3. It will probably, over time, become closer to 2 as I rate more of my collection. This gives me a lot of distinctiveness so that I can create Smart Playlists that work well for me.
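The categories above amount to filters over three fields: rating, the checked box, and play count. Here is a hypothetical Python model of them; the `Track` class, the function names, and the sample tracks are mine for illustration, and in iTunes itself these rules are built in the Smart Playlist dialog rather than in code.

```python
from dataclasses import dataclass

@dataclass
class Track:
    """Hypothetical model of the three iTunes fields this scheme relies on."""
    name: str
    rating: int      # 0 = unrated, otherwise 1-5 stars
    checked: bool
    play_count: int  # only increases when a song is played to the end

def device_tracks(library, min_rating):
    """Checked tracks rated min_rating or higher: 4 for the Shuffle, 3 for the Nano, 2 for the 40GB iPod."""
    return [t for t in library if t.checked and t.rating >= min_rating]

def mood_playlist(library, rating):
    """Unchecked tracks at one rating, which the author keeps in regular playlists instead."""
    return [t for t in library if not t.checked and t.rating == rating]

def unrated_listened(library):
    """Unrated tracks played to the end at least once, most-played first."""
    hits = [t for t in library if t.rating == 0 and t.play_count > 0]
    return sorted(hits, key=lambda t: t.play_count, reverse=True)

def unrated_unlistened(library):
    """Unrated, checked tracks with a play count of 0 (the state of a newly added song)."""
    return [t for t in library if t.rating == 0 and t.checked and t.play_count == 0]

library = [
    Track("An exemplar", 5, True, 40),
    Track("The Highwayman", 4, False, 3),
    Track("A variety pick", 2, True, 5),
    Track("Heard once", 0, True, 1),
    Track("Heard three times", 0, True, 3),
    Track("Just added", 0, True, 0),
]

print([t.name for t in device_tracks(library, 4)])  # ['An exemplar']
print([t.name for t in unrated_listened(library)])  # ['Heard three times', 'Heard once']
```

Keeping "checked" as a separate flag is what lets one rating (say, 4) feed two different kinds of playlist: the everyday device lists and the mood-specific ones.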

Here are some statistics from your iTunes Library: 6519 tracks, 726 (11%) rated

| | Number | % of rated | Cumulative % of rated: Actual | Cumulative % of rated: Target | Shortfall |
|---|---|---|---|---|---|
| Tracks rated 5 stars | 74 | 10 | 10 | 5 | -5 |
| Tracks rated 4 stars | 144 | 20 | 30 | 15 | -15 |
| Tracks rated 3 stars | 211 | 29 | 59 | 50 | -9 |
| Tracks rated 2 stars | 270 | 37 | 96 | 90 | -6 |
| Tracks rated 1 star | 27 | 4 | 100 | | |

Obviously, rating a large music collection can become a chore; you don't want to spend your limited listening time constantly fine-tuning your ratings. So I use some approaches that make it easier to rate my music with less effort:

- First, I sorted my catalog by my old ratings and moved everything down by 1 star: everything rated 2 became 1, 3 became 2, and so on. This gave me a good base to start with.

- Next, I created Smart Playlists for each rating (e.g., "Rating 5 - Exemplar"), with "Match only checked songs" and "Live updating" checked. I then added "Play Count" as a column to my view and sorted by it. This showed me the songs I played the most and the least, and I adjusted some songs up or down accordingly.

- Then I created a new Smart Playlist that simply plays songs rated 3 to 5, limited to 100 GB "selected by random" (in effect, everything in random order), and saved it as "Plays Well With Others". I play this on occasion in the background, and when I hear something that jars me, I know something isn't rated right. Thus, without a lot of effort, I can change ratings for songs that no longer fit their rating, or uncheck items whose rating was appropriate but which "didn't play well with others".

- When I'm using my iPod, I try to notice each song's rating and change it if it seems wrong. The next time I sync the iPod, the new rating is copied to my iTunes catalog.

- I also try to be aware of Play Count: this number only goes up if you play a song to the end. So even when I can't look at the rating (for instance, when I'm in a car), I can at least skip to the next song, which keeps a song I'm not enjoying from accumulating plays. Periodically I review the play counts of songs I've rated and consider moving them up or down accordingly. Of course, this means I have to be careful not to let the iPod keep running when I'm not listening.
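Two of these steps are mechanical enough to sketch in Python: the one-star downward shift, and a "Plays Well With Others" style selection. This is a hypothetical illustration over plain dictionaries, not a way to drive iTunes. Two assumptions are labeled here and in the comments: a 1-star song stays at 1 when shifted, and the selection stops at the first shuffled track that would exceed the size limit (how iTunes itself fills a limited Smart Playlist is not something this sketch claims to reproduce).

```python
import random

def shift_down(rating):
    """Move an old rating down one star; 0 (unrated) and 1 are assumed to stay as they are."""
    return rating - 1 if rating >= 2 else rating

def plays_well_with_others(tracks, limit_bytes, rng=random):
    """Random selection of checked 3-5 star tracks, filled up to a total size limit.

    Assumption: stops at the first shuffled track that would exceed the limit.
    """
    pool = [t for t in tracks if t["checked"] and 3 <= t["rating"] <= 5]
    rng.shuffle(pool)
    chosen, used = [], 0
    for track in pool:
        if used + track["size"] > limit_bytes:
            break
        chosen.append(track)
        used += track["size"]
    return chosen

old_ratings = [5, 4, 4, 3, 2, 1, 0]
print([shift_down(r) for r in old_ratings])  # [4, 3, 3, 2, 1, 1, 0]

# Hypothetical tracks; sizes in bytes.
tracks = [
    {"name": "A", "rating": 5, "checked": True, "size": 4_000_000},
    {"name": "B", "rating": 3, "checked": True, "size": 6_000_000},
    {"name": "C", "rating": 3, "checked": False, "size": 5_000_000},
    {"name": "D", "rating": 2, "checked": True, "size": 3_000_000},
]
playlist = plays_well_with_others(tracks, 100 * 10**9, random.Random(42))  # 100 GB limit
print(sorted(t["name"] for t in playlist))  # ['A', 'B']
```

Unchecking a track removes it from this pool, which matches the idea of unchecking songs that are rated correctly but "don't play well with others".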

A tip for those of you who put a lot of effort into your iTunes ratings: I've learned the hard way that, unlike most song information, the rating is NOT stored in the song file itself, so if your iTunes database gets corrupted, or you move your music to another server, you'll lose all your ratings. One way to avoid this is to periodically back up your ratings into a field that is stored in the song file. I personally use the "Grouping" field, since it is rarely used: I select all songs with the same rating, click "Get Info", and change the Grouping field to, for example, "My Rating: 5 Stars".
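The backup works because the Grouping text is a simple, parseable convention. Here is a minimal Python sketch of that convention, assuming the "My Rating: N Stars" wording above (the singular "Star" for 1 is my own choice). The function names are hypothetical, and the code only formats and parses the text; it does not read or write iTunes, so applying the text is still done through Get Info as described.

```python
import re

# Matches the Grouping convention described above, e.g. "My Rating: 5 Stars".
GROUPING_PATTERN = re.compile(r"My Rating: ([1-5]) Stars?")

def rating_to_grouping(stars):
    """Build the Grouping text used to back up a 1-5 star rating."""
    if not 1 <= stars <= 5:
        raise ValueError("only rated songs (1-5 stars) need a backup")
    return f"My Rating: {stars} {'Star' if stars == 1 else 'Stars'}"

def grouping_to_rating(grouping):
    """Recover the star rating from a Grouping value, or None if it holds something else."""
    match = GROUPING_PATTERN.fullmatch(grouping.strip())
    return int(match.group(1)) if match else None

print(rating_to_grouping(5))                     # My Rating: 5 Stars
print(grouping_to_rating("My Rating: 3 Stars"))  # 3
print(grouping_to_rating("Live recordings"))     # None
```

Returning None for anything that doesn't match means Grouping values used for other purposes are simply ignored when restoring ratings.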

I only have 11% of my collection rated so far, but using this system I’m finding it a lot easier to manage my ratings. I’m already getting many benefits from it – I’m playing my music more often, my iPods typically have the music I want on them, and various music discovery services can use my ratings to help me identify new music I might enjoy. This provides the incentive to keep me entering meaningful ratings.

Book Ratings - Amazon

Amazon also uses a 5-Star rating system, and your ratings can be used by Amazon to help you find books that you might like. Though I like to support my local bookstores, it is this feature that brings me back to Amazon time and again. Whenever I browse through Amazon and see a book I’ve already read I try to take the time to update my rating.

Amazon has a number of different tools to assist you in your ratings. If you are an Amazon customer, you can go to Improve Your Recommendations: Edit Items You Own and see all the books that you’ve purchased and quickly rate them with a nice AJAX interface. You can also review items that you’ve already rated, whether or not you own them, at Improve Your Recommendations: Edit Items You’ve Rated.

Amazon has also recently added a very nice web service called Your Media Library that can help you manage your library of books, music, and DVDs. I have only used it to manage my books and DVDs, as I find rating albums useless; it is songs that I prefer to rate.

After browsing through my ratings to date, I discovered the same flaw I had found in iTunes: my ratings were typically too high, and most were a 4. This is encouraged by the popup that appears when your cursor is over the Stars: "1 - I hate it, 2 - I don't like it, 3 - It's Ok, 4 - I like it, and 5 - I love it". I suspect that if I used my iTunes trick of making 2 Stars mean "Ok," I might make the recommendation engine less effective (though it could possibly make it better; I don't know). So instead I am being much more brutal with my ratings, pushing many more down to 3 so that my ratings of 4 and 5 have more meaning.

5 Stars: These have to be the exemplars: the best books I've ever read, which I would be glad to read again, would be proud to show off on my best bookshelf, and will buy extra copies of to give to friends.

4 Stars: These have to be really good books. I'm willing to read most of them again, and I promote them by offering to loan them to my more discriminating friends. Although I may keep them on my bookshelf, I'd rather give them to a friend than sell them at a used bookstore.

3 Stars: These are decent books, and I do share them with my voracious-reader friends. But I don't push them, and I'm much more likely to sell them at a used bookstore than keep them on my shelf. This is the rating that I significantly underused previously, and so far I'm finding that the key discriminator for me is how much I feel like recommending a book to friends who are more discriminating readers.

2 Stars: This rating is where the Amazon rating system fails the most. These are supposed to be books that "I don't like"; however, most of the time I don't buy books that I probably wouldn't like, much less read them, so I have very few in this category. Instead, I've decided this category is for books that are just not quite good enough: not bad, or disliked, but somewhat disappointing.

1 Star: This is where I put the books that I don't like or, worse, hate. There aren't many here, but I'm willing to take more risks than many people, so I have some. Books that just don't fit my interests also go here, like romance novels that get recommended to me because I like some crossover fantasy-romance authors.

Since I started rating my books more accurately at Amazon, I've found their suggestions for other books to read to be more accurate. Thus I am getting value from rating these books, and I have an incentive to keep making the effort.

Conclusion

Offering an incentive for people to rate is important for ratings of all sorts, with both individual gain and status recognition being powerful motivators.

However, the easiest technique for making a 5-point rating scale more useful is to make it "distinct". If each rating has a more specific meaning for the user, ratings will slowly settle toward a truer average, and thus more of the scale will be used. We've also tried this technique recently on RPGnet with our new Gaming Index; so far, our new 10-point scale (which has a distinct meaning for each number) is averaging 7.27. That's still a fair amount above the scale's midpoint of 5.5, but at least it's below the 8+ average that our old double 5-point scale produced.

Often you, as a consumer of rating systems, will be using rating scales designed by others rather than by yourself. In those cases it often makes sense to create your own rules for what each number means, and to do so in a way that puts your median at the midpoint of the scale rather than toward one of the extremes. When you do, even a tight 5-point scale will give you enough differentiation to be more meaningful than a thumbs up or thumbs down.
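A quick way to check whether your personal rules are working is to compare the median of your ratings with the midpoint of the scale, (low + high) / 2: 3 on a 1-5 scale and 5.5 on a 1-10 scale. Here is a minimal Python sketch; the function name and both sets of ratings are hypothetical, invented for illustration.

```python
from statistics import mean, median

def skew_report(ratings, low, high):
    """Compare a set of personal ratings with the midpoint of a low..high scale."""
    midpoint = (low + high) / 2
    mid = median(ratings)
    return {
        "midpoint": midpoint,
        "mean": mean(ratings),
        "median": mid,
        "median_offset": mid - midpoint,  # positive means skewed toward the top
    }

# Hypothetical personal ratings on a 1-5 scale.
skewed = [4, 4, 5, 4, 3, 4, 5, 4]
distinct = [2, 3, 3, 1, 4, 2, 3, 5]

print(skew_report(skewed, 1, 5))    # median 4.0, a full star above the midpoint
print(skew_report(distinct, 1, 5))  # median 3.0, right on the midpoint
```

A median offset near zero suggests your rules are spreading ratings across the whole scale rather than piling them up at 4.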

Related articles from this blog:

Related articles from Shannon Appelcline’s Trials, Triumphs & Trivialities:

Comments

See summary (or click my name below) for a summary of all the votes for the B5 Series. Averaging all episodes you get a mean score of 8.19 with a standard deviation of 0.84 - a *very* narrow range. Context and Motivation matter more than scale in getting reusable scores out of rating systems. As I like to tell the folks here at Yahoo! – the person creating the rating has to get something out of the transaction other than just altruism.

F. Randall Farmer 2006-08-11T09:49:28-07:00

I thought the issue, like Randy Farmer suggests, was that only positive discrete scales always tend to their maximum. The problem then in rating systems, like you mentioned I think earlier, is that readers have to interpret what the real norm and limits are. Even if every rating is in the upper quartile. You may want to check out what I did in Playerep (http://www.playerep.com), where the rating system is based on election but not a fixed scale (always norming to zero without influence). FWIW. Thanks. Adam

Adam MacDonald 2006-08-11T10:21:24-07:00

I like your classification scheme for iTunes. I'm going to have to work on adapting my scale (which is definitely showing signs of skewing towards the high end). One thing, though: you mention that you can't create smart playlists to show unchecked songs. It's true that you can't do it with only one playlist, but you can with two: #1) Any playlist (for example, your smart playlist for checked 4-star songs, which I assume is set to My Rating = 4, Match Only Checked Songs = checked). #2) Use these criteria: My Rating = 4, Playlist Is Not . Leave Match Only Checked Songs unchecked and enable Live Updating, and you have a smart playlist that shows your unchecked 4-star songs.

Andy Tinkham 2006-08-16T13:55:43-07:00

Quoting: "Unfortunately, iTunes does not let you select only unchecked items, so I don't have a Smart Playlist for these; instead I keep them in a regular playlist." You can: Make a smart playlist called 'Checked' with a rule that is always true (e.g. artist does not contain (leave the field empty), or bitrate > 0). Create a smart playlist called 'Unchecked' with the rule 'Playlist is not Checked'. There you have all your unchecked files, and from there you can make smart playlists that use this Unchecked playlist. I like your rating system. I have 40% at 3 stars now, and around 25% each at 2 and 4 stars. I might scale it down a little bit more. Hmm, after typing this I read the comments and see Adam McDonald already mentioned something similar about the unchecked items. I'll leave it here anyway…

Tino 2006-10-16T11:52:32-07:00

Christopher, I think some, if not all, of the issues with the 5-point rating system could be addressed with a better UI. For example, displaying a normal distribution curve skewed by accumulated rating to nudge raters away from the extremes. Of course, this doesn’t work well if sample size is too small which usually happens on the far end of the long tail.

Don Park 2006-12-17T18:25:50-07:00

Thanks for writing this article. It was informative to find another view on 5-point music rating besides my own.

Jonathan 2008-02-06T00:33:36-07:00